RAG - Complete Practical Guide

RAG, an LLM upgrade.

Introduction

Retrieval-Augmented Generation (RAG) is one of the main pillars of today's AI field. It is mainly used by large companies to manage and retrieve internal documents more effectively. In this article I explain some core RAG concepts with code snippets to make them easier to grasp. I also go over common problems I faced when implementing my own RAG system and present solutions along the way.

What is RAG?

RAG (Retrieval Augmented Generation) is a system design pattern that combines:

- Information retrieval (finding relevant knowledge)

- Large Language Models (LLMs) (generating responses)

Instead of relying only on what the model learned during training, a RAG system retrieves external knowledge and injects it into the prompt.

Traditional LLM

Question

↓

Model Memory (Training Data)

↓

Answer

Problems:

- knowledge can be outdated

- hallucinations happen

- cannot access private company data

RAG-based LLM

Question

↓

Retrieve Relevant Knowledge

↓

Add Context to Prompt

↓

LLM Generates Grounded Answer

This makes answers:

- more accurate

- grounded in documents

- customizable

- domain-specific

Why RAG?

LLMs are powerful but limited.

Common problems:

1. Hallucinations

The model invents facts.

Example:

Question:

Who founded Company X?

Answer:

John Smith.

Even if John Smith never existed.

2. Knowledge Cutoff

Models only know what they were trained on.

They do not automatically know:

- your PDFs

- internal documentation

- GitHub repositories

- recent updates

3. Private Data

Businesses need AI over:

- internal docs

- policies

- tickets

- codebases

RAG solves this.

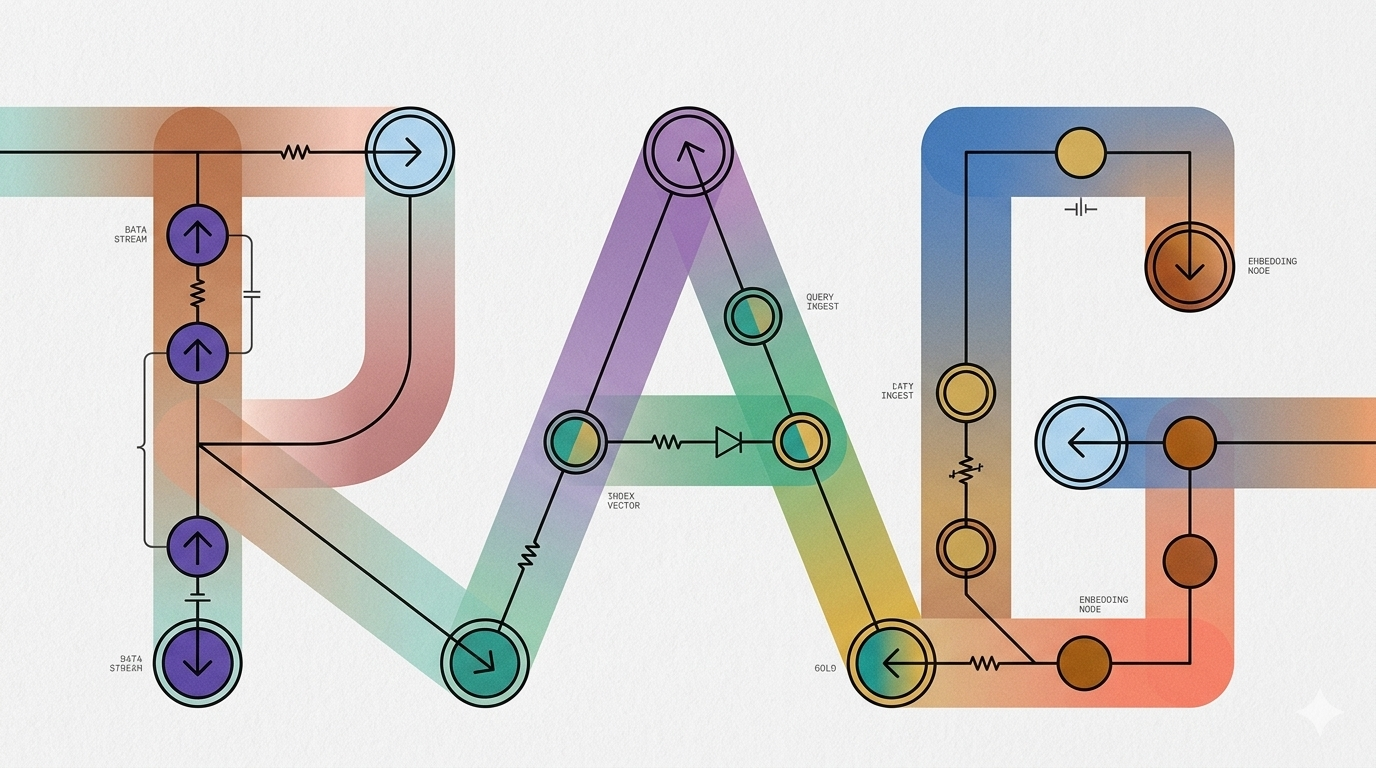

Core Architecture

A RAG system usually contains:

- Documents

- Chunking system

- Embedding model

- Vector database

- Retriever

- Prompt constructor

- LLM

Architecture:

Documents

↓

Chunking

↓

Embeddings

↓

Vector Database

User Question

↓

Question Embedding

↓

Similarity Search

↓

Relevant Chunks

↓

Prompt Construction

↓

LLM

↓

Answer

How RAG Works Step by Step

1. Documents

The system starts with raw documents.

Examples:

- TXT files

- PDFs

- Markdown files

- HTML pages

- GitHub repos

Example text:

RAG systems use vector databases to retrieve

relevant information for LLMs.

2. Chunking

Documents are split into smaller sections.

Why?

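Embedding an entire book as a single vector is ineffective: one vector cannot capture every topic in the book, and long texts can exceed the embedding model's input limit.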

Instead:

Large Document

↓

Small Chunks

Example:

Chunk 1 → Intro

Chunk 2 → Embeddings

Chunk 3 → Pinecone

3. Embeddings

Every chunk becomes a vector.

Example:

"RAG systems use retrieval"

becomes:

[0.12, -0.77, 0.48, ...]

4. Store in Vector Database

Vectors are stored in:

- Pinecone

- Weaviate

- Qdrant

- Chroma

- FAISS

5. User Question

Example:

What are embeddings?

Question becomes a vector too.

6. Similarity Search

The vector database finds:

Most similar chunks

based on mathematical similarity.

7. Prompt Construction

Retrieved chunks are injected into the prompt.

Example:

Context:

Embeddings are vector representations.

Question:

What are embeddings?

8. LLM Generation

The LLM generates an answer using retrieved context.
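A minimal sketch of the eight steps above in plain Python. It rests on loud assumptions: `toy_embed` is a hypothetical word-count stand-in for a real embedding model, the "vector database" is just a list, and the final LLM call is left out, so the last step only builds the prompt that would be sent.

```python
import math
import re
from collections import Counter

def toy_embed(text):
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def build_index(chunks):
    """Steps 1-4: embed every chunk and store (vector, text) pairs."""
    return [(toy_embed(chunk), chunk) for chunk in chunks]

def retrieve(index, question, top_k=2):
    """Steps 5-6: embed the question and return the most similar chunks."""
    query_vector = toy_embed(question)
    ranked = sorted(index, key=lambda item: cosine(query_vector, item[0]), reverse=True)
    return [text for _, text in ranked[:top_k]]

def build_prompt(question, context_chunks):
    """Step 7: inject the retrieved chunks into the prompt."""
    context = "\n\n".join(context_chunks)
    return f"Context:\n{context}\n\nQuestion:\n{question}\n\nAnswer:"

chunks = [
    "Embeddings are vector representations of text.",
    "Pinecone is a managed vector database.",
    "Chunking splits large documents into small sections.",
]
index = build_index(chunks)
top_chunks = retrieve(index, "What are embeddings?", top_k=1)
prompt = build_prompt("What are embeddings?", top_chunks)
```

Here `top_chunks` comes back as the embeddings sentence, because it is the only chunk that shares words with the question. A real embedding model would also match on meaning, not only on shared words.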

Key Concepts and Definitions

1. Embedding

A numerical semantic representation of text.

Example:

"Machine learning"

↓

[0.12, -0.34, ...]

Purpose:

- semantic understanding

- similarity search

2. Vector

An ordered list of numbers.

Example:

[0.12, -0.55, 0.91]

3. Dimension

The number of values inside a vector.

Example:

768-dimensional vector

means:

768 numbers

Why it matters:

The dimension of your vector DB index must match the dimension of your embeddings.

Example:

nomic-embed-text → 768

Pinecone index → must be 768
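A dimension mismatch usually surfaces as an error at upsert or query time, so it can help to check it yourself first. A small sketch, assuming a hypothetical helper `check_dimension` and a constant `INDEX_DIMENSION` that you keep in sync with your index:

```python
def check_dimension(vector, expected_dim):
    """Raise a clear error if a vector does not match the index dimension."""
    if len(vector) != expected_dim:
        raise ValueError(
            f"Embedding has {len(vector)} values, but the index expects {expected_dim}"
        )
    return vector

INDEX_DIMENSION = 768  # assumed to match nomic-embed-text, as in the example above

ok_vector = check_dimension([0.0] * 768, INDEX_DIMENSION)

try:
    # A 384-value vector, as a different embedding model might produce.
    check_dimension([0.0] * 384, INDEX_DIMENSION)
    mismatch_message = None
except ValueError as error:
    mismatch_message = str(error)
```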

4. Semantic Search

Search by meaning.

Not exact keywords.

Example:

Question:

How does memory work?

Can retrieve:

Agents retain context using memory systems.

5. Similarity Score

Measures closeness between vectors.

Higher score:

More relevant

Top-K

How many results to retrieve.

Example:

top_k=5

Means:

Return best 5 chunks
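Top-K is just "sort by score and keep the first K". A minimal sketch using the standard library, assuming the scores were already computed; the chunk names and score values are made up for illustration:

```python
import heapq

def select_top_k(scored_chunks, k=5):
    """Return the k (chunk_id, score) pairs with the highest scores, best first."""
    return heapq.nlargest(k, scored_chunks, key=lambda pair: pair[1])

scored = [("intro", 0.41), ("embeddings", 0.93), ("pinecone", 0.58), ("overlap", 0.12)]
best_two = select_top_k(scored, k=2)
```

A vector database does this for you, typically with an approximate index, so it does not have to score every stored vector.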

6. Metadata

Extra information attached to vectors.

Example:

{
  "text": "Embeddings are vectors",
  "source": "notes.txt",
  "topic": "rag"
}

Embeddings Explained

Embeddings convert text into mathematical meaning.

Texts with similar meanings end up close together.

Example:

"How to build AI agents"

and

"Creating autonomous agents"

become nearby vectors.

Generating Embeddings with Ollama

import ollama

def generate_embedding(text):
    # Ask the local Ollama server to embed the text with nomic-embed-text.
    response = ollama.embeddings(
        model="nomic-embed-text",
        prompt=text
    )
    return response["embedding"]

Test:

embedding = generate_embedding(
    "What is RAG?"
)
print(len(embedding))   # vector dimension (768 for nomic-embed-text)
print(embedding[:10])   # first 10 values

The code snippets above are from a RAG project I implemented. You can view the source code here

Vector Databases

A vector database stores embeddings.

Traditional DB:

Search by exact values

Vector DB:

Search by similarity

Common vector DBs:

- Pinecone

- Qdrant

- Weaviate

- Chroma

- FAISS

Chunking

Chunking is splitting documents.

1. Why Chunking Matters

Bad chunking = bad retrieval.

Example problem:

Chunk 1:

RAG systems use semantic

Chunk 2:

search through vectors

Meaning gets broken.
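One way to avoid the broken split above is to cut only at sentence boundaries. This is a sketch of that idea, not the chunker from my project: `chunk_by_sentence` and `max_chars` are hypothetical names, and the regex only handles simple `.`, `!` and `?` endings.

```python
import re

def chunk_by_sentence(text, max_chars=200):
    """Group whole sentences into chunks of at most max_chars characters.

    A single sentence longer than max_chars becomes its own oversized chunk.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            # Adding this sentence would overflow, so close the current chunk.
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks

text = "RAG systems use semantic search through vectors. Chunks should keep sentences whole."
chunks = chunk_by_sentence(text, max_chars=50)
```

With a 50-character limit, the two sentences end up in separate chunks and neither is cut in the middle.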

2. Character-Based Chunking

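def chunk_text(text, chunk_size=800, overlap=150):
    """Split text into fixed-size character chunks that overlap by `overlap` characters."""
    if overlap >= chunk_size:
        # Otherwise start would never advance and the loop would not end.
        raise ValueError("overlap must be smaller than chunk_size")
    chunks = []
    start = 0
    while start < len(text):
        end = start + chunk_size
        chunk = text[start:end]
        chunks.append(chunk)
        start += chunk_size - overlap
    return chunks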

3. Overlap

Preserves context.

Example:

Chunk 1 → 0-800

Chunk 2 → 650-1450

Overlap:

150 characters
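To see where those numbers come from: each chunk starts `chunk_size - overlap` characters after the previous one. A small sketch that computes only the offsets, with `chunk_spans` as a hypothetical helper and a 2000-character document as the assumed input:

```python
def chunk_spans(text_length, chunk_size=800, overlap=150):
    """Return the (start, end) character offsets of each chunk."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap  # 800 - 150 = 650 with the defaults
    return [
        (start, min(start + chunk_size, text_length))
        for start in range(0, text_length, step)
    ]

spans = chunk_spans(2000)
```

For 2000 characters this gives four spans: 0-800, 650-1450, 1300-2000 and 1950-2000. The last one is a 50-character tail already covered by the previous chunk, which is the kind of tiny chunk you may want to drop.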

Similarity Search

Pinecone compares vectors.

Usually using:

Cosine Similarity

Measures the angle between two vectors: the smaller the angle, the higher the score.

Similar meaning:

High cosine score
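Cosine similarity is the dot product of two vectors divided by the product of their lengths, which gives a value between -1 and 1. A plain-Python sketch with tiny hand-picked vectors (real embeddings have hundreds of dimensions):

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

same_direction = cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])  # close to 1
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])                # 0
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])                 # -1
```

Note that the second vector in the first call is twice as long as the first but points the same way, so the score is still about 1: cosine similarity ignores magnitude and looks only at direction.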

Retrieval Pipeline

Example retrieval:

query_embedding = generate_embedding(query)

results = index.query(
    vector=query_embedding,
    top_k=5,
    include_metadata=True
)

Explanation:

vector=query_embedding

Search using question vector.

top_k=5

Retrieve top 5 results.

include_metadata=True

Return each match's metadata, which is where the original chunk text is stored.
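`top_k=5` always returns five matches, even when some are barely related to the question. One option is to drop weak matches before building the prompt. This is a sketch under assumptions: the response is a hand-written stand-in shaped like the `results["matches"]` structure used in this article, each match is assumed to carry a `score` field, and the `0.5` threshold is arbitrary and needs tuning on your own data.

```python
def filter_matches(results, min_score=0.5):
    """Keep only matches whose similarity score reaches min_score."""
    return [m for m in results["matches"] if m["score"] >= min_score]

# Hand-written stand-in for a query response; the values are made up for illustration.
fake_results = {
    "matches": [
        {"id": "chunk-1", "score": 0.82, "metadata": {"text": "Embeddings are vectors."}},
        {"id": "chunk-7", "score": 0.31, "metadata": {"text": "Unrelated paragraph."}},
    ]
}
kept = filter_matches(fake_results, min_score=0.5)
kept_texts = [m["metadata"]["text"] for m in kept]
```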

Prompt Augmentation

This is the "augmentation" in RAG.

We inject context.

Example:

context = "\n\n".join(
    match["metadata"]["text"]
    for match in results["matches"]
)
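Joining every match can overflow the model's context window when chunks are long. A minimal sketch of a budgeted variant of the join above, assuming a hypothetical `max_context_chars` limit measured in characters (a real system would more likely count tokens):

```python
def build_context(chunks, max_context_chars=2000):
    """Join chunks in ranked order, stopping at the first chunk that does not fit.

    The budget counts chunk characters only, not the blank-line separators.
    """
    selected, used = [], 0
    for chunk in chunks:
        if used + len(chunk) > max_context_chars:
            break
        selected.append(chunk)
        used += len(chunk)
    return "\n\n".join(selected)

ranked_chunks = ["Embeddings are vector representations.", "x" * 5000, "Short tail."]
context = build_context(ranked_chunks, max_context_chars=100)
```

The loop stops at the first chunk that would exceed the budget instead of skipping it, so the context always holds the best-ranked chunks with no gaps in the ranking.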

Prompt Example

prompt = f"""
You are a helpful assistant.
Answer ONLY using the context.

Context:
{context}

Question:
{query}

Answer:
"""

Generation Phase

Send prompt to the LLM.

I used Mistral, running locally through Ollama:

response = ollama.chat(
    model="mistral",
    messages=[
        {
            "role": "user",
            "content": prompt
        }
    ]
)

print(response["message"]["content"])

Pinecone Concepts

Below are some Pinecone concepts I used that you might find helpful.

1. Index

Container of vectors.

Equivalent to:

Database table

2. Creating Index

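from pinecone import Pinecone

# API_KEY is your Pinecone API key, loaded elsewhere (for example from an environment variable).
pc = Pinecone(api_key=API_KEY)

pc.create_index(
    name="rag-demo",
    dimension=768,  # must match the embedding model's output size
    metric="cosine",
    spec={
        "serverless": {
            "cloud": "aws",
            "region": "us-east-1"
        }
    }
)

# Handle used by the upsert, query, and delete calls in this article.
index = pc.Index("rag-demo")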

3. Upsert

Insert/update vectors.

index.upsert(vectors=vectors)
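The `vectors` argument is a list of records, each with an ID, the embedding values, and optional metadata. A sketch of how such a list could be built and split into batches. `build_records`, `batched`, the `source-position` ID scheme and the batch size of 100 are my assumptions, and `fake_embed` is a hypothetical stand-in for `generate_embedding` so the example runs offline.

```python
def build_records(chunks, embed, source):
    """Turn text chunks into upsert-ready records with stable IDs and metadata."""
    return [
        {
            "id": f"{source}-{position}",
            "values": embed(chunk),
            "metadata": {"text": chunk, "source": source},
        }
        for position, chunk in enumerate(chunks)
    ]

def batched(records, batch_size=100):
    """Yield successive slices of at most batch_size records."""
    for start in range(0, len(records), batch_size):
        yield records[start:start + batch_size]

def fake_embed(text):
    """Hypothetical stand-in for generate_embedding so the sketch runs offline."""
    return [float(len(text)), 0.0, 1.0]

records = build_records(["first chunk", "second chunk"], fake_embed, "notes.txt")
batches = list(batched(records, batch_size=1))
# With a real index, each batch would then be sent with index.upsert(vectors=batch).
```

Stable IDs matter because upserting a record with an existing ID overwrites it, so re-indexing an updated document does not leave duplicates behind.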

4. Query

Search vectors.

index.query(...)

5. Delete

Delete vectors.

index.delete(delete_all=True)

Metadata in RAG

Store useful context.

Example:

metadata={
    "text": chunk,
    "source": "notes.txt",
    "section": "embeddings"
}

Useful later for:

- filtering

- citations

- debugging
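A sketch of the first two uses, filtering and citations, done on the client side over matches that are already retrieved. The helper names and the sample matches are hypothetical; the metadata keys are the ones from the example above.

```python
def filter_by_source(matches, source):
    """Keep only matches whose metadata points at the given source file."""
    return [m for m in matches if m["metadata"].get("source") == source]

def format_citations(matches):
    """List each distinct source once, in the order it first appears."""
    sources = []
    for match in matches:
        source = match["metadata"].get("source", "unknown")
        if source not in sources:
            sources.append(source)
    return "Sources: " + ", ".join(sources)

# Made-up matches, shaped like the metadata stored above.
matches = [
    {"metadata": {"text": "Embeddings are vectors", "source": "notes.txt", "section": "embeddings"}},
    {"metadata": {"text": "Chunks overlap", "source": "guide.md", "section": "chunking"}},
    {"metadata": {"text": "Vectors have dimensions", "source": "notes.txt", "section": "embeddings"}},
]
from_notes = filter_by_source(matches, "notes.txt")
citations = format_citations(matches)
```

The `citations` string can be appended to the generated answer so the reader can see which files it was grounded in.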

Best Practices

These are some best practices to follow when building your RAG system:

- Retrieval quality > model quality

- Use metadata

- Keep chunks meaningful

- Avoid tiny chunks

- Re-index after document updates

- Use overlap

- Start simple before frameworks

- Debug retrieval separately from generation

There are some caveats, however: real production RAG systems often add features that my simple personal RAG system does not have, such as:

- authentication

- streaming

- caching

- citations

- reranking

- hybrid search

- observability

- evaluation pipelines

- vector versioning

- document syncing
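As a small taste of what "debug retrieval separately from generation" and "evaluation pipelines" can mean in practice, here is a sketch of a hit-rate check: for a handful of questions where you know which chunk should come back, measure how often it shows up in the top K. Everything here is hypothetical, including the chunk IDs and `fake_retrieve`, which stands in for a real query against your index.

```python
def hit_rate_at_k(test_cases, retrieve, k=5):
    """Fraction of questions whose expected chunk ID appears in the top-k results."""
    if not test_cases:
        return 0.0
    hits = sum(
        1 for question, expected_id in test_cases
        if expected_id in retrieve(question, k)
    )
    return hits / len(test_cases)

def fake_retrieve(question, k):
    """Hypothetical retriever returning canned chunk IDs, standing in for a real query."""
    canned = {
        "What are embeddings?": ["chunk-2", "chunk-5"],
        "What is overlap?": ["chunk-9", "chunk-1"],
    }
    return canned.get(question, [])[:k]

cases = [("What are embeddings?", "chunk-2"), ("What is overlap?", "chunk-4")]
score = hit_rate_at_k(cases, fake_retrieve, k=2)
```

One of the two expected chunks is found, so `score` is 0.5. No LLM is involved, which makes it easy to tell a retrieval problem apart from a generation problem.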

Glossary

| Term | Meaning |
|---|---|
| RAG | Retrieval-Augmented Generation |
| Embedding | Numerical representation of text |
| Vector | Ordered list of numbers |
| Dimension | Number of values in vector |
| Chunk | Small document section |
| Metadata | Extra vector information |
| Top-K | Number of retrieved results |
| Similarity Search | Finding closest vectors |
| Cosine Similarity | Vector closeness metric |
| Index | Pinecone vector collection |
| Upsert | Insert/update vector |
| Retrieval | Finding relevant knowledge |
| Generation | Producing final answer |
| Hallucination | Fabricated answer |
| Reranking | Reordering retrieved chunks |
| Hybrid Search | Semantic + keyword retrieval |

Conclusion

Dear reader, I hope my take on RAG helped you understand, even a little, how these systems work under the hood, from embedding to retrieval to generating a grounded response. That is the essence of a RAG system.